The human writes a Markdown file. The AI runs 100 experiments overnight. The bottleneck isn’t compute, it’s your program.md.

Karpathy open-sourced autoresearch: an AI agent that runs ~100 ML experiments on a single GPU overnight. The human never touches the code, only a Markdown file. From Ralph Wiggum bash loops to Gas Town’s 30-agent factory, the pattern is the same: design the arena, let AI iterate.

Andrej Karpathy’s new autoresearch repo has exactly three files that matter. One is fixed. One is the agent’s domain. The third is yours, and it’s a Markdown document.

You write program.md, a plain-text file that tells an AI agent how to think about research. The agent edits the training code, trains a small language model for exactly five minutes, checks the score, keeps or discards the result, and loops. All night. Without you.
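
Here is a minimal sketch of that keep-or-discard loop in Python. `propose` stands in for the agent editing train.py, and `evaluate` stands in for one five-minute training run. Both functions, along with the toy learning-rate objective, are hypothetical and exist only so the code runs. In autoresearch the edits are real code changes guided by program.md, and the bookkeeping happens as commits on a git branch rather than in a Python dict.

```python
import math
import random
from typing import Callable

Config = dict[str, float]
LogEntry = tuple[int, float, bool]  # (experiment index, val_bpb, kept?)


def research_loop(
    baseline: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    n_experiments: int,
    seed: int = 0,
) -> tuple[Config, float, list[LogEntry]]:
    """Greedy keep-or-discard search: a change survives only if val_bpb drops."""
    rng = random.Random(seed)
    best_cfg = dict(baseline)
    best_bpb = evaluate(best_cfg)  # score the unmodified baseline first
    log: list[LogEntry] = []
    for i in range(n_experiments):
        candidate = propose(best_cfg, rng)  # stand-in for the agent editing train.py
        bpb = evaluate(candidate)  # stand-in for one fixed-budget training run
        kept = bpb < best_bpb  # lower bits per byte is better
        if kept:
            best_cfg, best_bpb = candidate, bpb  # analogous to keeping the commit
        # Otherwise the candidate is simply dropped (analogous to reverting it).
        log.append((i, bpb, kept))
    return best_cfg, best_bpb, log


# --- Toy stand-ins, invented purely so the sketch runs ---
def toy_propose(cfg: Config, rng: random.Random) -> Config:
    """Hypothetical 'edit': rescale the learning rate by a random factor."""
    new_cfg = dict(cfg)
    new_cfg["lr"] = cfg["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0])
    return new_cfg


def toy_evaluate(cfg: Config) -> float:
    """Hypothetical score: pretend val_bpb is lowest at lr = 3e-3."""
    return 1.0 + (math.log10(cfg["lr"]) - math.log10(3e-3)) ** 2


if __name__ == "__main__":
    best, bpb, log = research_loop({"lr": 1e-4}, toy_propose, toy_evaluate, 100)
    n_kept = sum(kept for _, _, kept in log)
    print(f"kept {n_kept} of {len(log)} experiments; best val_bpb {bpb:.4f}")
```

The greedy rule is the whole strategy in this sketch. A change survives only if it beats the current best, so the sequence of kept results is strictly decreasing in val_bpb.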

What Karpathy Actually Built

The quiet genius is the fixed 5-minute wall-clock training budget. It doesn’t matter what the agent changes: a new architecture, a different optimizer, a different batch size. Every run gets exactly 5 minutes and is judged on the same metric, val_bpb (validation bits per byte, lower is better). Because val_bpb is measured per byte of text rather than per token, it doesn’t depend on vocabulary size, so architectural changes are compared fairly.
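
The two ingredients that make runs comparable can be sketched as follows. This sketch rests on two assumptions. `train_for_budget` stops on elapsed wall-clock time rather than on a step count. `bits_per_byte` uses the standard conversion: summed cross-entropy in nats, divided by ln 2 and then by the number of UTF-8 bytes. Both function names, the fake clock, and the toy losses are hypothetical. This is not the code in train.py or prepare.py.

```python
import math
import time
from typing import Callable


def train_for_budget(
    step: Callable[[], None],
    budget_s: float = 300.0,  # 5 minutes of wall-clock time
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Run optimizer steps until the wall-clock budget is spent; return the step count.

    The budget is fixed in seconds, not steps, so a change that makes each step
    faster is rewarded with more steps inside the same five minutes.
    """
    start = clock()
    steps = 0
    while clock() - start < budget_s:
        step()
        steps += 1
    return steps


def bits_per_byte(token_nll_nats: list[float], token_texts: list[str]) -> float:
    """Convert per-token cross-entropy (natural log) into bits per UTF-8 byte.

    Dividing by bytes instead of tokens puts models with different
    vocabularies on the same denominator for the same text.
    """
    total_bits = sum(token_nll_nats) / math.log(2)  # nats -> bits
    total_bytes = sum(len(t.encode("utf-8")) for t in token_texts)
    return total_bits / total_bytes


class FakeClock:
    """Simulated clock so the demo is deterministic; steps advance it by hand."""

    def __init__(self) -> None:
        self.now = 0.0

    def __call__(self) -> float:
        return self.now


def steps_in_budget(seconds_per_step: float) -> int:
    """Hypothetical helper: how many steps fit in 300 s at a given step time."""
    clock = FakeClock()

    def step() -> None:
        clock.now += seconds_per_step  # pretend one optimizer step takes this long

    return train_for_budget(step, budget_s=300.0, clock=clock)


# Same 11-byte text, two hypothetical tokenizations, same total loss of 5 nats.
coarse = bits_per_byte([2.0, 3.0], ["hello", " world"])  # 2.5 nats per token
fine = bits_per_byte([1.0, 1.0, 1.5, 1.5], ["he", "llo", " wor", "ld"])  # 1.25 nats per token
print(coarse == fine)  # True
print(steps_in_budget(0.5), steps_in_budget(1.0))  # 600 300
```

In the toy numbers, the finer tokenization has half the per-token loss but identical bits per byte. A model whose steps take half as long gets twice as many steps in the same five minutes.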

“The idea: give an AI agent a small but real LLM training setup and let it experiment autonomously overnight. It modifies the code, trains for 5 minutes, checks if the result improved, keeps or discards, and repeats. You wake up in the morning to a log of experiments and (hopefully) a better model.”

— karpathy, karpathy/autoresearch: AI agents running research on single-GPU nanochat training automatically (GitHub)

That works out to about 12 experiments per hour, or roughly 100 overnight. The agent works on a git feature branch, accumulating commits as it finds better settings for the network architecture, the optimizer, and the rest of the hyperparameters.
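
The throughput figures are simple arithmetic, sketched below with a hypothetical helper. The 8-hour night and the per-run overhead parameter are assumptions, not numbers from the repo. Real runs also spend some time on startup and evaluation, so the real count lands somewhat below the zero-overhead figure.

```python
def experiments_per_night(
    hours: float = 8.0,  # assumed length of "overnight"
    train_min: float = 5.0,  # the fixed training budget per run
    overhead_min: float = 0.0,  # hypothetical startup/eval time per run
) -> int:
    """Number of complete fixed-budget runs that fit in the given window."""
    minutes_available = hours * 60
    return int(minutes_available // (train_min + overhead_min))


print(experiments_per_night(hours=1))  # 12 per hour
print(experiments_per_night())  # 96, i.e. "roughly 100"
print(experiments_per_night(overhead_min=1.0))  # 80 with one minute of overhead per run
```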

Three files. prepare.py handles one-time data prep and is never modified. train.py contains the full GPT model, optimizer (Muon + AdamW), and training loop, all in ~630 lines of code, and the agent edits it freely. program.md is where the human shapes the agent’s research strategy. That’s the entire system.

Karpathy says program.md is already “90% AI written I ain’t writing all that.” Even the instructions that guide the agent are mostly written by AI.

The Prologue

Karpathy included a fictional prologue in the repo, dated March 2026:

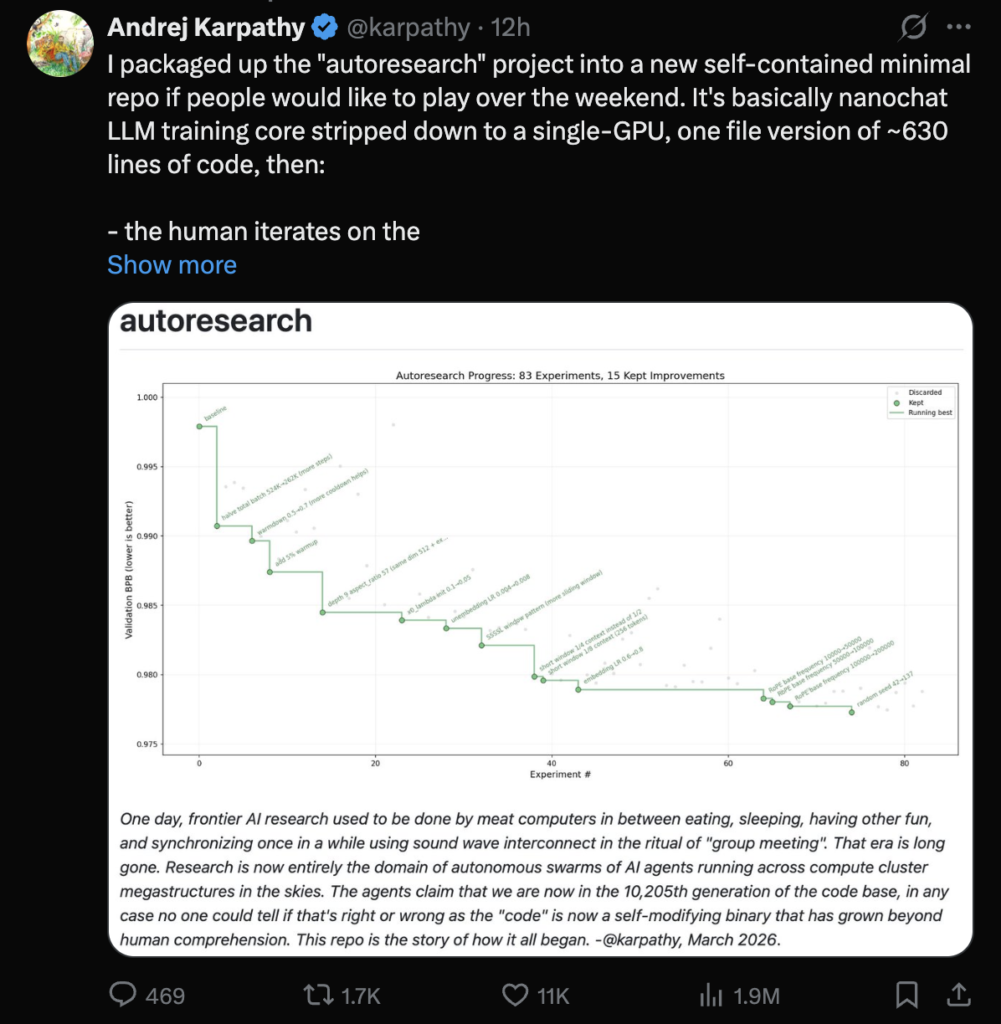

“One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun, and synchronizing once in a while using sound wave interconnect in the ritual of ‘group meeting’. That era is long gone. Research is now entirely the domain of autonomous swarms of AI agents running across compute cluster megastructures in the skies.”

— karpathy, karpathy/autoresearch: AI agents running research on single-GPU nanochat training automatically (GitHub)

He means us. He means group meetings. He wrote it in past tense, from the perspective of a fictional future, and then published it in the present. That’s either a joke or a manifesto. Possibly both.

He’s also running a bigger cousin of the setup on 8xH100 with the production nanochat codebase:

“I packaged up the ‘autoresearch’ project into a new self-contained minimal repo if people would like to play over the weekend. It’s basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:
- the human iterates on the…”

— Andrej Karpathy (@karpathy), March 7, 2026 (https://twitter.com/karpathy/status/2030371219518931079)

276 experiments, 29 kept improvements. “I’ll just leave this running for a while.” No postdoc checking in at midnight. No lab meeting to sync on results. The machine runs, the git history accumulates, the model improves.

From Vibe Coding to Autonomous Research

The timeline tells the story. February 2025: Karpathy fires off a throwaway tweet that accidentally coins “vibe coding.” The term ends up on his Wikipedia page. February 8, 2026: he proposes “agentic engineering,” noting that “you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight.” March 7, 2026: he releases autoresearch, where the human doesn’t even orchestrate. The human writes a Markdown file, and the agent experiments indefinitely.

You write code. Then you tell AI to write code. Then you tell AI how to think about writing code. Each step removes one layer of human involvement.

The best labs won’t just have the most compute. They’ll have the best program.md.

A grad student running one experiment per day is competing against a single GPU running 100 experiments overnight with no breaks, no forgetting, and no lab politics. The repo collected 1,500 stars and 172 forks in its first days after release.